Welcome to AI for Everyone.

This is The Trend, where we break down one important shift in technology and what it means for builders and learners.

The future favors those who notice changes early.

Let’s dive in.

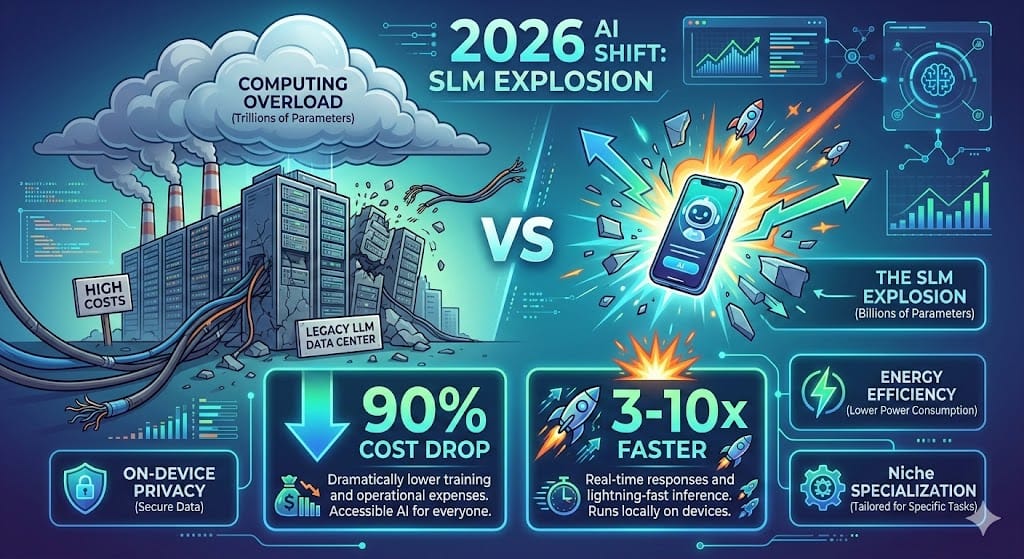

🚨 2025 was all about chasing bigger LLMs… but 2026 just called the bluff.

Last year, we chased "bigger is better." In 2026, we’re chasing "smarter and faster." While massive LLMs still have their place, Small Language Models (SLMs) are the ones actually running the world's agents.

As NVIDIA researchers recently argued, the future of agentic AI isn’t in a massive data center; it’s in your pocket. Dell’s 2026 Edge predictions echo it: "LLMs are legacy; SLMs are the engine."

The Stats that Matter:

90% Cost Drop: Running a fine-tuned 3B model costs pennies compared to 70B+ API calls.

3-10x Lower Latency: Near-real-time responses with no "cloud-ping" lag, because there is no network round trip.

Privacy-First & Cost-Free: 100% local. Your data never leaves the device, and your API bill stays at $0.
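To put the first two numbers in perspective, here is a quick back-of-the-envelope sketch in Python. The $1,200/month baseline is the cloud-bill figure used later in this issue. The 600 ms baseline latency is a purely hypothetical placeholder, not a measurement, and the function names are ours.

```python
def monthly_cost_after_drop(baseline_usd: float, drop_fraction: float) -> float:
    """Monthly cost after cutting the baseline by drop_fraction (0.0 to 1.0)."""
    if not 0.0 <= drop_fraction <= 1.0:
        raise ValueError("drop_fraction must be between 0 and 1")
    return baseline_usd * (1.0 - drop_fraction)


def latency_after_speedup(baseline_ms: float, speedup: float) -> float:
    """Latency after an N-times speedup (speedup must be positive)."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_ms / speedup


# $1,200/month is the figure quoted in this issue; 90% is the claimed drop.
print(f"${monthly_cost_after_drop(1200, 0.90):.2f} per month")  # $120.00 per month

# 600 ms is a hypothetical cloud round trip, used only for illustration.
for speedup in (3, 10):
    print(f"{speedup}x faster: {latency_after_speedup(600, speedup):.0f} ms")
# 3x faster: 200 ms
# 10x faster: 60 ms
```

Swap in your own bill and your own measured latency; the point is only to show what the headline percentages mean in absolute terms.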

SLM vs LLM 2026 – Tiny Wins Big

Keep reading to see why (and how) this changes everything. 🔥

What exactly defines an SLM in 2026?

An SLM isn't just a "diet LLM." It’s a model (typically 0.5B–10B parameters) trained on high-density, curated data: think "textbooks" instead of "the whole chaotic internet."

Phi-4-mini (Microsoft): At 3.8B parameters (the full Phi-4 is 14B), it’s reportedly matching models many times its size in Python coding and logical reasoning.

Qwen3 (Alibaba): The 0.6B variant, Qwen3-0.6B, is the most downloaded model on Hugging Face this quarter for a reason: it’s the king of mobile-native AI.

In 2026, Small Language Models (SLMs) like Phi-4 and Qwen3 are outperforming LLMs in cost, privacy, and edge deployment.
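Why do these parameter counts matter for edge deployment? A rough rule of thumb is that the weights alone take (parameters × bits per weight ÷ 8) bytes. The sketch below applies that formula to the sizes mentioned above. It is an estimate under stated assumptions: it counts weights only (no KV cache, activations, or runtime overhead), uses 1 GB = 10^9 bytes, and the function name is ours.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    if params_billions <= 0 or bits_per_weight <= 0:
        raise ValueError("inputs must be positive")
    # billions of params * bits per weight / 8 bits per byte = billions of bytes
    return params_billions * bits_per_weight / 8


# Parameter counts taken from the text; bit widths are common choices
# (4-bit quantized vs. 16-bit half precision), assumed for illustration.
for params, bits in [(0.6, 4), (3.8, 4), (3.8, 16), (10, 16)]:
    print(f"{params}B @ {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
# 0.6B @ 4-bit: 0.3 GB
# 3.8B @ 4-bit: 1.9 GB
# 3.8B @ 16-bit: 7.6 GB
# 10B @ 16-bit: 20.0 GB
```

Read it this way: a quantized sub-4B model can plausibly fit in phone or laptop memory, while the top of the SLM range at full half precision already needs workstation-class hardware.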

SLM Power in Your Pocket – 2026 Ready

Real Projects You Can Fork Today

GitHub Goldmines to Jump In Now (Stars exploding in 2026):

ro-ko/Awesome-SLM → Curated list of top SLM papers & models—your ultimate bookmark!

ai-in-pm/Small-Language-Model-SLM-Guide → Step-by-step guide to build your own SLM from scratch.

The One Insight You Need

Gartner predicts that by 2027, organizations will use small, task-specific models at least three times more than general-purpose LLMs. We are moving from "AI that knows everything" to "AI that does one thing perfectly."

The future isn’t bigger. It’s specialized.

🚀 Ready to run your first model in under 60 seconds?

Bigger isn't better. Smarter is.

If you're tired of $1,200/month cloud bills, it's time to switch. Grab our 2026 SLM Starter Kit, built specifically for beginners. It includes:

✅ The 3 best "One-Click" AI installers for 2026.

✅ A list of "Low-RAM" models for older laptops.

✅ 5 prompts to test if your local AI is working.
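If you want a feel for what "testing if your local AI is working" can look like, here is a minimal smoke-test sketch. It assumes you are running Ollama (one possible local runner, not necessarily one of the installers in the kit) on its default port, and that you have already pulled a small model. The model tag `qwen3:0.6b` and the helper names are assumptions for illustration.

```python
import json
import urllib.request

# Assumed default endpoint of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt: str, model: str = "qwen3:0.6b") -> dict:
    """Request body for a single, non-streamed completion."""
    return {"model": model, "prompt": prompt, "stream": False}


def ask_local_model(prompt: str, model: str = "qwen3:0.6b",
                    url: str = OLLAMA_URL, timeout: float = 60.0) -> str:
    """Send one prompt to the local server and return the generated text."""
    data = json.dumps(build_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


def looks_alive(reply: str) -> bool:
    """Crude check: the model returned some non-whitespace text."""
    return bool(reply.strip())
```

With the server running, `looks_alive(ask_local_model("Reply with the single word: ready"))` should come back `True`. If the call raises a connection error instead, the local server is not up. Nothing here leaves your machine, which is the whole point.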

That’s The Trend for SLMs.

Stay curious. Stay ahead.

Until next time :)