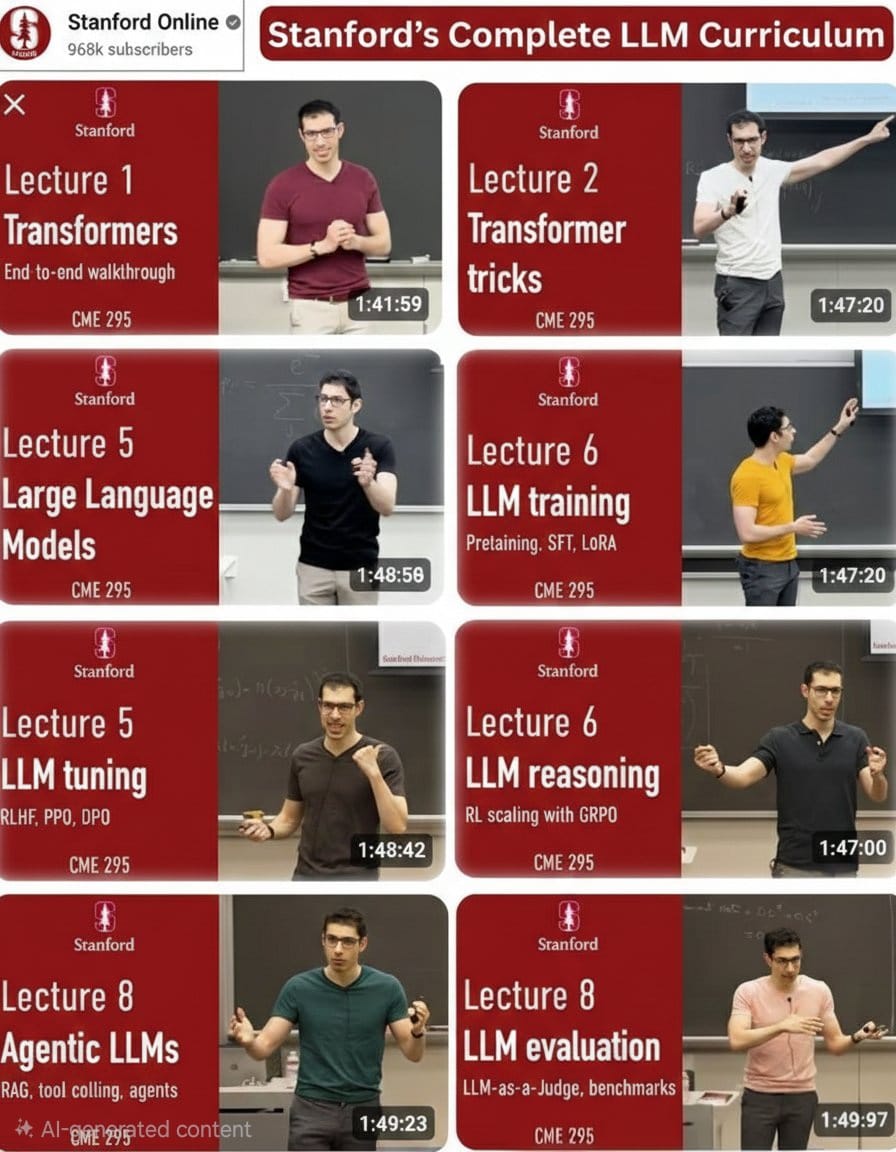

Stanford LLM Curriculum: From Prompt User to AI Engineer

Large Language Models (LLMs) are the foundation of modern AI systems such as ChatGPT and Claude.

But most people only interact with these models as users.

If you want to go deeper and understand how these systems actually work, you need to learn the concepts behind:

Transformers

LLM training

Instruction tuning

AI reasoning

Agent systems

Model evaluation

This guide follows the structure of Stanford’s LLM curriculum and organizes the lectures into a clear step-by-step learning path.

By studying these modules in order, you can move from prompt user → AI engineer.

How to Use This Guide

Follow this study process while going through the lectures.

• Watch lectures in the given order

• Take handwritten or digital notes

• After each lecture, summarize the key ideas in your own words

• Try to implement at least one small experiment per section

• Do not skip steps — each concept builds on the previous one

Module 1: Transformers Fundamentals

What you’ll learn

What tokens are

Embeddings and vector representations

Attention mechanism

Self-attention

Encoder vs decoder architectures

Why transformers replaced RNNs and CNNs in NLP

Outcome

You’ll understand the core architecture behind modern AI systems.

Watch

Transformers Fundamentals

https://youtu.be/Ub3GoFaUcds?si=h_-8aszab0WLDlG8
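A small experiment for this module: the sketch below computes scaled dot-product self-attention in plain Python. It is a minimal illustration, not the lecture's code. It assumes the token vectors are used directly as queries, keys, and values (a real transformer first applies learned projection matrices), and the three toy vectors are made up.

```python
import math

def softmax(scores):
    # Subtract the max before exponentiating for numerical stability.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy "embeddings" for three tokens (hypothetical values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence, and each row sums to 1.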

Module 2: Transformer Tricks & Optimization

What you’ll learn

Positional encoding

Layer normalization

Residual connections

Scaling strategies

Training stability techniques

Outcome

You’ll understand how transformers scale to billions of parameters.

Watch

Transformer Tricks

https://youtu.be/yT84Y5zCnaA?si=NKDHfaRNw8KEK0P1
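A small experiment for this module: the sketch below implements three of the listed ideas in plain Python. It assumes the classic sinusoidal form of positional encoding (other schemes exist, and the lecture may cover different ones), layer normalization without learned scale and shift parameters, and a pre-norm residual wrapper. The function names are illustrative.

```python
import math

def sinusoidal_position(pos, d_model):
    """Sinusoidal encoding: sin on even dimensions, cos on odd ones."""
    enc = []
    for i in range(0, d_model, 2):
        angle = pos / (10000 ** (i / d_model))
        enc.append(math.sin(angle))
        enc.append(math.cos(angle))
    return enc[:d_model]

def layer_norm(x, eps=1e-5):
    """Normalize one vector to zero mean and unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def pre_norm_residual(x, sublayer):
    """Residual connection: add the sublayer output back onto its input."""
    return [a + b for a, b in zip(x, sublayer(layer_norm(x)))]

print(sinusoidal_position(3, 4))
print(layer_norm([1.0, 2.0, 3.0]))
# A stand-in sublayer that halves its input (hypothetical).
print(pre_norm_residual([1.0, 2.0, 3.0], lambda h: [0.5 * v for v in h]))
```

The residual path lets the input pass through unchanged when the sublayer contributes little, which is one reason deep stacks remain trainable.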

Module 3: From Transformers to Large Language Models

What you’ll learn

What makes an LLM different from a small model

Scaling laws

Emergent abilities

Pretraining objectives

Data distribution effects

Outcome

You’ll understand why large models behave differently from smaller ones.

Watch

Large Language Models

https://youtu.be/Q5baLehv5So?si=fqxbQrwyRFhrYAWX
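A small experiment for this module: scaling laws are often written as a power law, loss ≈ a · N^(−alpha), where N is the model size. The sketch below fits that form with a straight-line regression in log-log space. The data points are synthetic, generated from made-up constants (a = 5.0, alpha = 0.1) purely so the fit can be checked; they are not measurements from any real model.

```python
import math

def fit_power_law(sizes, losses):
    """Fit loss = a * size**(-alpha) by least squares on log-log data."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(l) for l in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope

# Synthetic data from hypothetical constants, not real results.
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [5.0 * n ** -0.1 for n in sizes]

a, alpha = fit_power_law(sizes, losses)
print(f"a = {a:.3f}, alpha = {alpha:.3f}")
```

On real training runs the points will not sit exactly on a line, so the fitted exponent is only an estimate.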

Module 4: LLM Pretraining

What you’ll learn

Pretraining pipelines

Token prediction objectives

Dataset construction

Compute and scaling considerations

Where model capabilities (“intelligence”) emerge during training

Outcome

You’ll understand how base models are created and trained.

Watch

LLM Training

https://youtu.be/VlA_jt_3Qc4?si=GcKuwAWWiAdJ5PWO
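A small experiment for this module: the token prediction objective is the average negative log-probability the model assigns to each actual next token. The sketch below measures it for a bigram count model with add-one smoothing, which stands in for a neural network here. The tiny corpus and whitespace "tokenizer" are assumptions for illustration only.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each previous token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_loss(counts, tokens, vocab):
    """Average cross-entropy (in nats) of predicting each next token."""
    total = 0.0
    for prev, nxt in zip(tokens, tokens[1:]):
        row = counts[prev]
        # Add-one smoothing so unseen pairs keep non-zero probability.
        prob = (row[nxt] + 1) / (sum(row.values()) + len(vocab))
        total -= math.log(prob)
    return total / (len(tokens) - 1)

tokens = "the cat sat on the mat the cat ran".split()
vocab = set(tokens)
counts = train_bigram(tokens)
loss = next_token_loss(counts, tokens, vocab)
print(f"loss = {loss:.3f}, uniform baseline = {math.log(len(vocab)):.3f}")
```

A loss below the uniform baseline means the model has picked up some structure from the data. LLM pretraining minimizes the same quantity with a transformer in place of the count table.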

Module 5: Instruction Tuning & Alignment

What you’ll learn

Supervised fine-tuning (SFT)

RLHF (Reinforcement Learning from Human Feedback)

PPO (Proximal Policy Optimization) and DPO (Direct Preference Optimization)

Alignment techniques

Why raw models behave differently from chat models

Outcome

You’ll understand how base models become helpful AI assistants.
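A small experiment for this module: the DPO loss for one preference pair can be computed from four sequence log-probabilities. The sketch below assumes you already have those numbers from a policy model and a frozen reference model; the values used are made up, and beta = 0.1 is only an illustrative default.

```python
import math

def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss for one (chosen, rejected) pair of log-probabilities."""
    # How much more the policy prefers the chosen answer than the reference does.
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    # -log(sigmoid(beta * margin)), written as log(1 + exp(-beta * margin)).
    return math.log1p(math.exp(-beta * margin))

# Hypothetical log-probabilities.
same_as_reference = dpo_loss(-5.0, -7.0, -5.0, -7.0)
prefers_chosen = dpo_loss(-4.0, -8.0, -5.0, -7.0)
print(same_as_reference, prefers_chosen)
```

When the policy matches the reference, the loss is log 2. It falls as the policy shifts probability toward the chosen answer relative to the reference.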

Watch

Module 6: LLM Reasoning

What you’ll learn

Why models make reasoning mistakes

Chain-of-thought prompting

Reinforcement learning for reasoning

GRPO (Group Relative Policy Optimization) and scaling reasoning

Outcome

You’ll understand how reasoning improves through training and prompting.

Watch

LLM Reasoning

https://youtu.be/k5Fh-UgTuCo?si=ookqU35nxwfEp9pJ
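A small experiment for this module: the core idea of GRPO is to sample several answers to the same prompt and score each one relative to the group, rather than training a separate value model. The sketch below shows only that advantage computation. The rewards are made up (1.0 for a correct answer, 0.0 otherwise), and using the population standard deviation is an assumption; implementations differ on this detail.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and spread of its group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    # eps avoids division by zero when every sample gets the same reward.
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled answers to one prompt (hypothetical rewards).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])
```

Answers that beat the group average get a positive advantage and are reinforced; the rest are pushed down. If all answers score the same, every advantage is zero and the group carries no learning signal.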

Module 7: Agentic LLMs

What you’ll learn

Tool calling

Retrieval-Augmented Generation (RAG)

Planning agents

Memory systems

Autonomous workflows

Outcome

You’ll learn how LLMs evolve from text generators to action-taking systems.

Watch

Agentic LLMs

https://youtu.be/h-7S6HNq0Vg?si=b3i2zKiZi7MgE6Gk
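A small experiment for this module: tool calling usually comes down to a loop in which the model either requests a tool or gives a final answer. The sketch below shows that loop with a scripted stand-in for the model. Everything here is hypothetical: `fake_model`, the JSON message shape, and the `add` tool are invented for illustration and do not match any particular vendor's API.

```python
import json

def add(a, b):
    return a + b

# Registry of tools the agent is allowed to call.
TOOLS = {"add": add}

def fake_model(messages):
    """Stand-in for an LLM: requests the add tool once, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return json.dumps({"answer": f"The result is {last['content']}."})
    return json.dumps({"tool": "add", "args": {"a": 2, "b": 3}})

def run_agent(question, model, max_steps=5):
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        reply = json.loads(model(messages))
        if "answer" in reply:
            return reply["answer"]
        # Execute the requested tool and feed the result back to the model.
        result = TOOLS[reply["tool"]](**reply["args"])
        messages.append({"role": "tool", "content": str(result)})
    return None  # Gave up after max_steps without a final answer.

print(run_agent("What is 2 + 3?", fake_model))
```

The `max_steps` cap is a simple guard against an agent that keeps calling tools without ever answering.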

Module 8: LLM Evaluation

What you’ll learn

Benchmarking methods

LLM-as-a-judge

Evaluation datasets

Measuring reasoning and reliability

Why demos can be misleading

Outcome

You’ll learn how to properly evaluate AI systems.

Watch

LLM Evaluation

https://youtu.be/8fNP4N46RRo?si=wShichCLbslEECDb
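A small experiment for this module: a basic benchmark score is exact-match accuracy, and reporting its standard error shows how little a handful of examples can tell you. The sketch below assumes a simple normalization (lowercasing and collapsing whitespace), and the predictions and references are made up.

```python
import math

def normalize(text):
    """Lowercase and collapse whitespace before comparing answers."""
    return " ".join(text.lower().split())

def exact_match_accuracy(predictions, references):
    """Return (accuracy, standard error) for paired answers."""
    hits = [normalize(p) == normalize(r)
            for p, r in zip(predictions, references)]
    accuracy = sum(hits) / len(hits)
    # Binomial standard error: shrinks only as the test set grows.
    stderr = math.sqrt(accuracy * (1 - accuracy) / len(hits))
    return accuracy, stderr

# Hypothetical model outputs and gold answers.
predictions = ["Paris", " 4 ", "blue"]
references = ["paris", "4", "red"]
accuracy, stderr = exact_match_accuracy(predictions, references)
print(f"accuracy = {accuracy:.2f} ± {stderr:.2f}")
```

With only three examples the uncertainty is large, which is one concrete reason a few impressive demo outputs say little about overall reliability.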

Module 9: What’s Next in LLMs

What you’ll learn

Future trends in AI

Multimodal models

Better reasoning systems

More efficient architectures

The direction of AI research

Outcome

You’ll understand where AI and LLM research is heading.

Suggested 14-Day Study Plan

Days 1–2: Transformers fundamentals

Day 3: Transformer tricks

Days 4–5: Large language models

Days 6–7: LLM pretraining

Days 8–9: Instruction tuning & alignment

Day 10: LLM reasoning

Days 11–12: Agentic LLMs

Day 13: LLM evaluation

Day 14: Future trends and revision

Final Advice

To truly understand LLM systems:

• Rewatch difficult lectures

• Write summaries after each module

• Build small projects while learning

• Focus on concepts, not just tools

Master these ideas once, and every AI tool you use afterwards will make much more sense.